Does Cardinal, Hanson, and Wakeham’s 1996 Study Prove Authorship in FC? Part 5 (What FCed Errors Tell Us About Facilitator Influence)

Today’s blog post is the fifth in a series reviewing a 1996 article titled “Investigation of authorship in facilitated communication.” (Links to prior blog posts are below.) The Cardinal, Hanson, and Wakeham study is one of the most frequently cited articles on proponent lists claiming to “prove” communication independence in facilitated communication (FC). Proponents seem to cite it because of the number of participants (43 started the study and 41 finished it). But the design has serious flaws, starting with this one: despite Cardinal et al.’s stated intent to blind facilitators to the test stimuli, the researchers gave the facilitators open access to the list of 100 words used in the study. In doing so, the authors sabotaged their own study.

As Wegner, Fuller, and Sparrow’s article “Clever Hands: Uncontrolled Intelligence in Facilitated Communication” suggests, facilitators who have access to the test stimuli can’t help but (unconsciously or semi-consciously) lead their clients to the correct responses. A more rigorously designed test would have controlled facilitator access to the word list. That would have allowed participants to demonstrate their language and literacy skills without interference from the facilitators, and it would have taken away the facilitators’ opportunity to act on their (non-conscious) impulses to “help” their clients spell out the desired answers.

Does Cardinal et al.’s study prove proponent claims of communication independence in FC-generated messaging? Nope. (Image by Daniel Herron)

The Cardinal et al. study shared some characteristics with the reliably controlled testing that was conducted in the early-to-mid-1990s:

They chose facilitator-student pairs that worked well together outside the testing situation,

They conducted the tests in familiar settings,

They chose words (as test stimuli) that were familiar to the participants and age-appropriate.

But that’s where the similarities ended. The researchers in this study:

Gave facilitators (and recorders) open access to the word list used as test stimuli,

Did not control for visual or auditory cueing,

Did not include a “distractor” condition, which would have separated facilitator responses from participant responses.

In the “distractor” conditions typically used in reliably controlled authorship tests, the facilitator and participant are shown test stimuli (e.g., a picture or word) separately, and on some trials the two see different stimuli. The participant is then asked to spell out, with facilitation, the picture or word that he or she saw. If the participant knows how to spell and can communicate that knowledge independently, the answers should be based on the information shown to the participant. If, on the other hand, the participant does not know how to spell, the answers will be based on the information shown to the facilitator. It’s interesting to me that Cardinal et al. purposely chose not to include this condition in their study. They claim they left it out so that participants would not have to “compete” with facilitators for the answers, which, to me, signals that the authors were more aware of the problems of facilitator influence and control over letter selection than they were willing to let on.

Another curious aspect of the Cardinal et al. study was how vague the authors were in reporting the participants’ correct versus incorrect responses. They reported their findings as percentages or means rather than as counts. Even the number of trials is reported inconsistently (sometimes as “more than 3800 trials” and sometimes as “approximately 3800 trials”). In a 2025 email exchange with me, Cardinal indicated that some data were left out of the final report and that he was sure he had the data at the time the study was published. Unfortunately, when I asked, he couldn’t provide that data or clarify the test results any further. All I wanted to know was how many correct vs. incorrect responses were given in each of the study’s conditions (e.g., Baseline 1, Baseline 2, and the “facilitated” condition).

Note: For more information about each of the test conditions, please see my prior blog posts (links below).

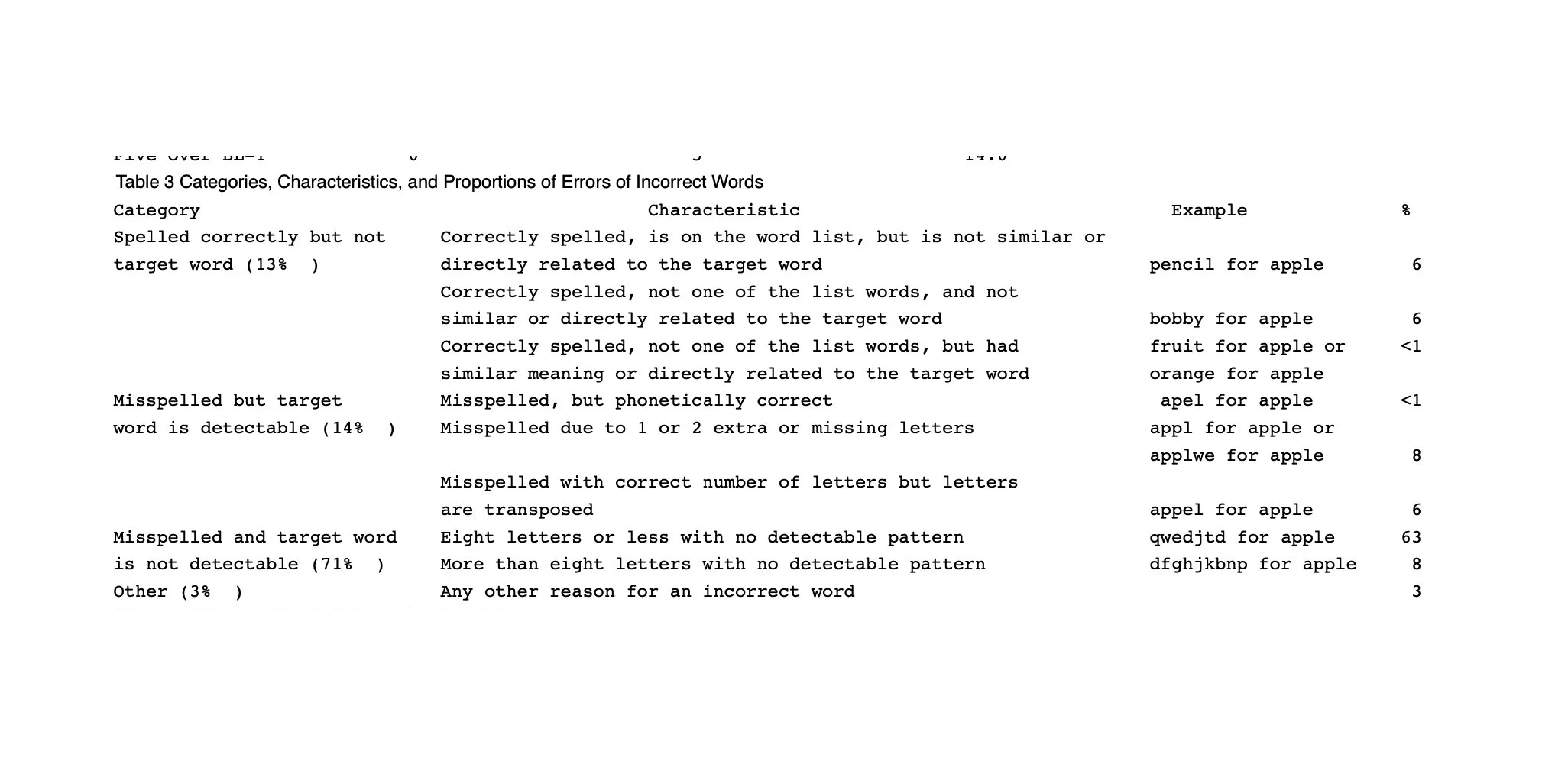

Table 3: Categories, Characteristics, and Proportions of Errors of Incorrect Words (Cardinal et al., 1996)

To me, the most revealing part of the study is what Cardinal et al. labeled Table 3: Categories, Characteristics, and Proportions of Errors of Incorrect Words. The researchers did not report how many of the “more than” or “approximately” 3800 words spelled out by the participants were incorrect. Still, by looking at the characteristics of the FC-generated errors, we can gather clues about the participants’ ability (or inability) to spell independently (i.e., without facilitator interference) and about the facilitators’ propensity to guess at the answers when they knew the words on the list but did not know which of the 100 words their client had been asked to spell.

According to Table 3, 13% of the words deemed “incorrect” by the researchers were spelled correctly but were not the target word. Within this group, there seem to be two types of errors:

Words that were spelled correctly and were on the word list, but were not “similar to or directly related” to the target word. Because the word list was not given to the participants before or during testing, while the facilitators had open access to it, the “errors” in this category were likely facilitator guesses.

Words that were spelled correctly and were not on the word list, but had a similar meaning or were directly related to the target word. It doesn’t make sense to me that a participant who was shown a word on a flashcard and told both the word and its meaning (by the recorder) would not then be able to spell out the exact target word if he or she knew it, especially given the pattern-recognition skills of many people with autism. Participant circumlocution can’t be ruled out entirely, but errors in this category, too, were likely facilitator guesses.

Facilitator cueing and control over letter selection could have been ruled in or ruled out by including a “distractor” condition in the study.

Image of testing using a “distractor” condition in which the facilitator is blinded to the images the participant sees (and vice versa). “Distractor” conditions can be implemented without partitions; this image comes from one of the earliest authorship tests in the U.S. (see Wheeler et al., 1993).

Another category in Table 3, accounting for 14% of the incorrect words, was misspelled words in which the target word was still detectable. Within this group, there were several types of errors:

Misspelled but phonetically correct words. The researchers told us that all the words on the word list were selected because they were familiar to every participant, including the youngest in the group. While beginning spellers often use phonetic or “inventive” spelling, the researchers led us to believe that all the students could spell all the words in their (unstructured) classroom setting. Any use of phonetic or inventive spelling should have been reported in the Baseline 1 condition (i.e., where participants were asked to spell out words without physical touch from the facilitators). During facilitator-dependent letter selection, errors of this type can occur because of the ideomotor response (unconscious muscle movements). (See my blog post What we can learn about FC, the Pseudo-ESP Phenomenon, and Facilitator Cueing from Kezuka’s “The Role of Touch in Facilitated Communication”.)

The ideomotor response can also explain the other two types of errors in this group: misspelled words with one or two extra or missing letters, and misspelled words with the correct number of letters but with letters transposed. Reliably controlled testing should include a complete speech/language evaluation (including written language skills), conducted without facilitator interference, to document each participant’s independent understanding and use of spoken, nonverbal, and written language.

A third category in Table 3 comprised words that were misspelled and in which the target word was not detectable. This category accounted for 71% of the incorrect words. That is such a large share of the errors that, to me, it’s no wonder the authors tried to hide the test results by reporting their findings as percentages or averages instead of revealing the true number of incorrect responses out of the “approximately” 3800 trials. It’s likely the facilitators had overestimated their clients’ independent language and literacy skills, a phenomenon documented in some of the earliest authorship studies.

The authors split this category into two groups: strings of eight or fewer letters with no detectable pattern, and strings of more than eight letters with no detectable pattern. In other words, the participants didn’t know how to spell the words. To me, this indicates that at least some of the facilitators may have been resisting the urge to “assist” the participants during these sessions. It also raises questions about the participants’ understanding and use of written language (questions that could have been answered with a complete and independent speech/language evaluation):

Did the participants have the comprehension skills to understand the target words presented to them (outside the visual and auditory range of the facilitators), and did they understand what they were supposed to do (i.e., spell out the target words)?

Did the participants have letter-sound recognition and an understanding of how to put those letters into sequences to make meaningful words?

And, finally, the last 3% of errors in Table 3 were categorized as “any other reason for an incorrect word.” The researchers didn’t provide any examples of words in this category, so we’re left guessing what these errors could be. At this point, though, I don’t think it matters much. We’ve seen that the errors made by the facilitated participants in this study can be attributed to facilitator influence and control (however inadvertent) and/or to the participants’ lack of the language and literacy skills needed to be proficient in written language.

Others may well analyze the Cardinal et al. study and find additional flaws in the test design and/or ways to interpret the reported results of each condition that clarify just how poorly the participants did at spelling out common words, given that:

participants were purported to know how to spell the 100 words included in the FC study (and to use the words in sentences) as part of their regular educational programming,

participants were asked to spell out these common words with a familiar facilitator in a familiar setting, and

the facilitators were given open access to the test stimuli.

I’m going to wind up my review of this study. To me, analyzing the errors charted in the Cardinal et al. study proved that facilitator influence and control over letter selection affected the participants’ performances—even without a distractor condition or documentation of the total correct vs. incorrect responses in the trials.

In my 2025 email exchange with him, Cardinal denied that this was an authorship study (although “authorship” is in the title of the report). He said that data had been left out of the final report that would have answered my questions about it (namely, the exact number of correct and incorrect responses for each participant in each condition). I’m not sure why Cardinal wouldn’t want to clarify his position publicly (e.g., that information was left out of the report, that the way the data were reported was confusing to readers, or that the study was not actually an authorship study). If he knew his original report contained errors or misinformation, why didn’t he address that, either during the initial editing process or in a later article? From my perspective, the 1996 report most likely contained exactly the information the researchers wanted to present to further their pro-FC stance. (Cardinal remains a believer in FC as an independent form of communication that works through the trust-bond between facilitator and client, despite the large body of research proving otherwise.)

In summary, I believe this study raises more questions than it answers about how facilitators convince themselves that they are not influencing letter selection. I’m not saying that all facilitators purposely control letter selection. Most are unaware of the extent to which their own behaviors contribute to the “spelling” activity. I think it’s a pity that, with a few small tweaks to its design, Cardinal et al.’s investigation of FC could have included a reliable test for authorship. But, alas, the researchers seemed to sabotage their own study by giving facilitators open access to the words used in the trials. Did the researchers not trust their facilitators or FC to produce independent communication? Why did they omit the “distractor” condition? Maybe, deep down, the researchers knew what would happen if their clients were left to spell out words on their own (i.e., without interference from the facilitators).

In my opinion, the Cardinal et al. study is just one more poorly designed attempt and squandered opportunity for pro-FC researchers to provide the scientific community with reliably controlled evidence to back up their claims that FC represents more than just the thoughts of (literate) facilitators who “support” their clients during letter selection using physical, visual, and auditory cues.

Blog posts in this series

Does Cardinal, Hanson, and Wakeham’s 1996 Study Prove Authorship in FC? Part 2 (Test Design)

Does Cardinal, Hanson, and Wakeham’s 1996 Study Prove Authorship in FC? Part 3 (Competing for words)

Does Cardinal, Hanson, and Wakeham’s 1996 Study Prove Authorship in FC? Part 4 (Facilitator Behaviors)

References and Recommended Reading

Cardinal, D. N., Hanson, D., & Wakeham, J. (1996). Investigation of authorship in facilitated communication. Mental Retardation, 34(4), 231-242.

Kezuka, E. (1997, October). The role of touch in facilitated communication. Journal of Autism and Developmental Disorders, 27(5), 571-593. DOI: 10.1023/A:1025882127478

Spitz, H. (1997). Nonconscious movements: From mystical messages to facilitated communication. Routledge. ISBN 978-0805825633

Wegner, D. M., Fuller, V. A., & Sparrow, B. (2003, July). Clever hands: Uncontrolled intelligence in facilitated communication. Journal of Personality and Social Psychology, 85(1), 5-19. DOI: 10.1037/0022-3514.85.1.5

Wheeler, D. L., Jacobson, J. W., Paglieri, R. A., & Schwartz, A. A. (1993). An experimental assessment of facilitated communication. Mental Retardation, 31(1), 49-60.