Does Cardinal, Hanson, and Wakeham’s 1996 Study Prove Authorship in FC? Part 4 (Facilitator Behaviors)

Today’s blog post is the fourth in a series featuring a 1996 article titled “Investigation of authorship in facilitated communication.” This is one of the top studies that proponents include on pro-FC lists as “proof” of authorship in FC.

I will provide links to the previous blog posts in this series below.

Image by Agence Olloweb

To review quickly, Cardinal et al. stated that they wanted to 1) develop a protocol that controlled for variables that could threaten the study’s validity (e.g., “blind” the facilitators); 2) allow participants to communicate their thoughts without over-controlling the situation (and potentially jeopardizing the user-facilitator relationship); and 3) promote a “naturally” controlled environment during testing.

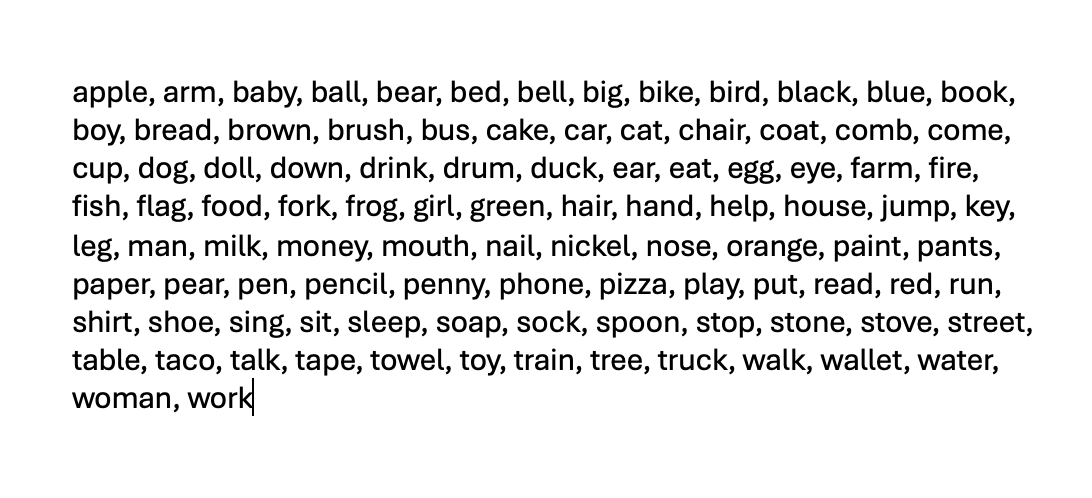

As we saw from the previous blog posts, Cardinal et al. essentially sabotaged their own goal of developing a protocol that “controlled for variables that could threaten the study’s validity” by giving facilitators and the people documenting the facilitated messages (called recorders) in the study access to the word list being used for test stimuli. It is likely that the facilitators were sincere in their belief that they could support the participants during FC without drawing on their knowledge of the words on the list, but we know from past scientific studies that verbal, physical, and visual cueing can and does occur unconsciously (or semi-consciously) both when facilitators are touching the wrists, elbows, shoulders (or other body parts) of their clients and when they hold the letter board in the air.

The study included three separate activities: Baseline 1, a “Facilitated Condition,” and Baseline 2. Baselines 1 and 2 were, purportedly, unfacilitated activities where participants were asked to spell out words by touching a laminated photocopy of “alpha and QWERTY” letterboards in upper case letters. Cardinal et al. reported that most of the participants also used electronic devices, so it’s interesting to me that the researchers chose to use laminated letter boards in the study. Laminated letter boards make the accuracy (or inaccuracy) of letter selection more difficult to determine, for reasons I will elaborate on in a moment.

Nevertheless, in these two conditions, facilitators were not allowed to physically touch the participants. The goal for the baseline conditions was to see how many words the participants could type out on their own. In Baseline 1, none of the participants could spell more than one word out of 5 in any of the trials. Baseline 2 was harder to interpret because the researchers failed to include in their report how many correct or incorrect responses there were in any of the trials. From what I can tell, the participants were not given direct instruction on how to spell any of the words presented in the testing, so I wonder how any of the participants could achieve a success rate of more than 1 out of 5 words (scores from the most “successful” participants from Baseline 1) without facilitator “assistance” (e.g., cueing).

Note: Other types of cueing (e.g., visual and auditory) during letter selection were not addressed in the report. (See my blog post An FC Primer for more information about the types of physical and non-physical cueing that occur in FC and its variants.)

For these two baseline conditions, it is not clear to me why, if the students were able to spell independently (e.g., without interference from a facilitator), a facilitator needed to be present in the room—except that the facilitators all had open access to the word list used in the testing. Proponents, likely, would argue that the facilitator’s presence was necessary for “emotional” support, but Cardinal et al. mentioned that all of the facilitators and recorders were known to the participants. The activities were familiar to the participants as well and carried out in a classroom the participants regularly visited. Wouldn’t the presence of the recorder be enough reassurance for the participants to spell out common words that they, purportedly, were successfully using in their academic (classroom) activities?

The “Facilitated Condition” involved having the recorder show the participant a target word written on a card while the facilitator was out of visual and auditory range. The recorder also spoke the word aloud and explained the meaning of the word to the participants. Then, the facilitators were brought back into the testing room.

In the “Facilitated Condition,” the facilitators were allowed to physically touch the participants and “spell” via FC in their “natural” (or usual) way. The Cardinal et al. report didn’t include information about the type of physical support each participant required, but typically touch-based FC “support” means that the facilitator holds the person’s wrist, elbow, shoulder or other body part (e.g., neck, leg) during letter selection. Physical cueing can be obvious in some cases or very subtle (e.g., barely detectable to the naked eye), so the only way Cardinal et al. could have ruled out physical cueing with any certainty in any of the test conditions would have been to control for facilitator behaviors (and thus separate facilitator behaviors from those of the participants).

Cardinal et al. believed that the chance of the facilitator guessing the correct word was 1 in 100, but, since the facilitators had open access to the word list, once an initial letter was selected (even by accident), the pool of possible words shrank to those beginning with that letter and the chance of a facilitator guessing the target word increased significantly. (See my last blog post for a more detailed explanation).
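To make the arithmetic concrete, here is a minimal Python sketch. The ten-word list is a made-up stand-in (the study's actual word list is not reproduced here), and `guess_probability` is a hypothetical helper name. It assumes the guesser picks uniformly at random among the listed words that fit the letters selected so far, which is a simplification.

```python
# Hypothetical stand-in list; NOT the word list used by Cardinal et al.
WORD_LIST = ["ball", "bed", "bird", "cat", "cup", "dog", "door", "fish", "hat", "sun"]


def guess_probability(word_list, known_prefix=""):
    """Chance of a uniform random guess hitting the target, given that the
    guesser knows the list and the letters selected so far (known_prefix)."""
    candidates = [w for w in word_list if w.startswith(known_prefix)]
    if not candidates:
        return 0.0  # no listed word fits the letters selected so far
    return 1 / len(candidates)


if __name__ == "__main__":
    print(guess_probability(WORD_LIST))       # no letters known: 1 in 10
    print(guess_probability(WORD_LIST, "b"))  # first letter known: 1 in 3
    print(guess_probability(WORD_LIST, "f"))  # only one word fits: certain
```

With a longer list the same pattern holds: the stated odds only apply to someone who has no knowledge of the list, and each selected letter removes candidates for anyone who does.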

The most reliable way to control for facilitator behaviors is to prevent them from accessing test stimuli (in this case the target word list). To make sure the recorders weren’t inadvertently providing cues to the participants or to the facilitators, their behavior, too, would have to be controlled. But, because Cardinal et al.’s protocols for the testing did not include such controls, influence through physical, visual, and/or auditory cueing cannot be ruled out.

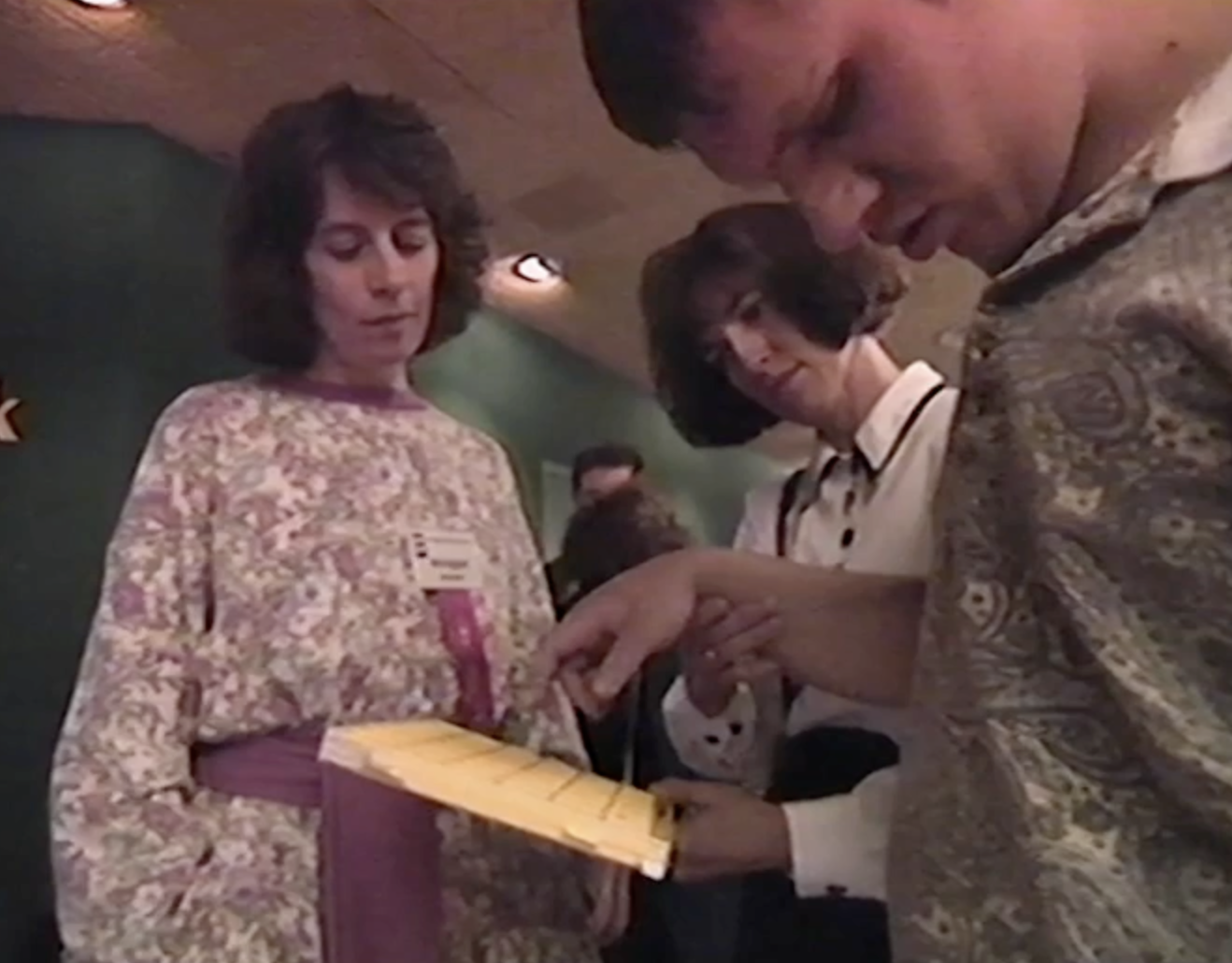

Syracuse-trained master facilitator Annegret Schubert looks on as the facilitator holds the board in the air and selects letters while her client has his eyes closed. (from Prisoners of Silence, 1993).

As I was writing this blog post, it occurred to me that the researchers do not say in their report if the facilitators were holding the letter board in the air. When facilitators hold the board in the air, they can’t help but move the board. Most facilitators are unaware of the extent to which they do so, particularly when they’re actively engaged in asking and answering questions, calling out letters, prompting their clients to look at the board or keep going, etc. As we’ve seen in analyzing pro-FC YouTube videos and films, facilitators (often inadvertently) move the board up/down, left/right to aid in letter selection, particularly when they know (or think they know) the word being spelled out. (See Wegner and Sparrow’s Clever Hands: Uncontrolled Intelligences in Facilitated Communication). Stabilizing the letter board by placing it flat on a table or mounting it to a stationary stand reduces or eliminates the problem of facilitator cueing caused by movements of the board in the air. It does not, however, completely solve the problem of physical, visual and verbal cueing.

Although low-tech “devices” (including letter boards) can be used as part of legitimate Augmentative and Alternative Communication (AAC) programming, with FC there is generally no interaction between facilitators and their clients to confirm that the letter the facilitator is calling out is the one the client wants. With evidence-based methods (like E-tran) the assistant confirms each letter selection by eliciting a confirmation from the client. This confirmation can be verbal (yes or no) or non-verbal (head nods or shakes, eye blinks, raised fingers, etc.). Facilitators rarely, if ever, engage with their clients in this way, as they’re taught in FC workshops to presume competence and that questioning clients (even to confirm letter selection) threatens to break the facilitator-client trust bond. Given the limited information provided in the Cardinal et al. report, there is no way to determine if facilitators were holding the letter boards in the air and if participants were provided an opportunity during the trials to independently confirm letter selection through verbal or non-verbal means.

Facilitators using laminated, photocopied letter boards also frequently make mistakes calling out letters that they think participants have selected. Because there is no printout to chart letter selection and letter selection is often so rapid that facilitators don’t have time to process whether the individual touched on a letter, beside a letter, or in the space between letters, facilitators can think they’re accurately calling out letters when they are not. By analyzing YouTube videos with facilitators using plastic letter boards, we’ve learned (anecdotally) that participants tend to repetitively select letters in the upper middle range of the letter boards (probably because that is the area of the board that most comfortably matches their range of motion) and facilitators, with surprising regularity, call out letters and numbers on the periphery of the letter board that the participants have not touched.

In many pro-FC YouTube videos and films, we also see facilitators calling out letters when the participants are not looking at the letter board. Proponents claim almost supernatural visual abilities in their clients to explain away the problem. Central vision, not peripheral vision, is required to discern letters on a letter board (peripheral vision is designed to detect motion and changes in light and dark). And, even when an individual is able to detect broad cues (e.g., hand signals) via peripheral vision, it is not possible for individuals being facilitated to see letters being selected when they have their eyes closed and/or are turned completely away from the letter board. The Cardinal et al. study did not mention if the participants were looking at the letter board and/or had their eyes open during facilitation.

Note: I recently read a systematic review about vision problems in individuals with Autism Spectrum Disorder (ASD). The authors reported that narrowed peripheral vision is more prevalent in people with ASD than in those without. Narrowed peripheral vision makes letter selection harder (because of a greater reliance solely on central vision) and seems to discredit proponent claims that their clients have almost superhuman peripheral vision.

We don’t know the error rate of the letters called out by the facilitators in the Cardinal study because, as far as I know, there are no videos to analyze. Even if the observers in the study could have detected such errors with the naked eye (it’s not always possible), the observers, tasked with monitoring the implementation of test protocols, were only present about one-third of the time. Cardinal et al. reported that 70 of the trials were eliminated because of faulty data collection methods and/or failure to use the proper technique for random word selection. There is no way to know whether these same errors occurred when the observers were not present to witness the testing situation. And, while we can assume that facilitators, recorders, and observers were all sincere about performing their assigned tasks, it’s not possible from reading the Cardinal et al. study to know whether the letters facilitators called out during the trials were the ones selected by the participants. Nor is it possible to know whether the letters called out by the facilitators were accurately transcribed by the recorders.

Word list used in the Cardinal et al. study

Cardinal et al. stipulated that a correct response was scored only if it was spelled perfectly “with no extra or repeated letters in front of, after, or within the word.” This standard was selected because of its unambiguity. A word was spelled correctly or not. It matched the target word shown to the participant or not. There was no need for interpretation of the responses.
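As an illustration only, an all-or-nothing rule of this kind can be sketched in a few lines of Python. The function name `is_correct` is hypothetical, and the decision to ignore letter case is my assumption (the boards were in upper case); none of this comes from the report itself.

```python
def is_correct(target, response):
    """Exact-match scoring: the recorded response counts only if it is
    letter-for-letter identical to the target word.
    Assumption: case is ignored, since the boards used upper-case letters."""
    return response.strip().upper() == target.strip().upper()


if __name__ == "__main__":
    print(is_correct("phone", "PHONE"))   # exact match
    print(is_correct("phone", "FON"))     # phonetic spelling is scored wrong
    print(is_correct("phone", "PPHONE"))  # repeated letter is scored wrong
```

A rule like this removes interpretation at the scoring step, but it can only be as accurate as the letters the facilitator called out and the recorder wrote down.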

And while I appreciate the “cut and dried” nature of this stipulation, I wonder about beginning spellers who use phonetic or “invented” spelling in their early written work. A neurotypical beginning speller might, for example, spell the word “phone” as f-o-n. They wouldn’t necessarily know that /ph/ sounds like /f/ in English or that the word would end with a silent /e/. On the other hand, proponents (erroneously) claim that nonspeaking or minimally speaking individuals with autism have intact literacy skills, so, perhaps the (well-documented) developmental stages of written language don’t apply to individuals using FC who are paired with literate facilitators.

I find it curious, too, that letters that were supposedly deleted and corrected during facilitation were not counted as inaccurate (provided the resulting word was spelled correctly). This makes sense when using a typewriter (as was most likely available in the 1990s) or a keyboard. But for as many YouTube videos as I’ve looked at and analyzed, I can’t think of one instance where a delete key was used on a plastic laminated letter board or stencil in the same way as it would be on an electronic keyboard. Not to say that it can’t happen, but I wasn’t taught that as a facilitator (in the early 1990s) and I’ve never seen an example of it throughout the years I’ve been analyzing videos of FC sessions. Rarely does a facilitator check for accuracy in spelling and if, by chance, their client appears to be perseverating on a letter, the facilitator (and not the client) makes the correction by either pulling the client’s hand away or pulling the letter board away.

In my experience and observations, most facilitators presume competence in their clients and assume that every letter they (the facilitators) call out is “correct.” Given all that is known and documented in the scientific community about facilitator authorship in FC (see Controlled Studies and Systematic Reviews), I’d suggest that perhaps it’s not in the best interest of nonspeaking, profoundly autistic individuals (and others who are subjected to facilitator-dependent techniques) to presume competence in facilitators’ abilities to support their clients without directly or indirectly interfering with letter selection through physical, visual, and/or auditory cueing.

So, with these questions about facilitator cueing and control over letter selection in mind, and with an apparent breach of Cardinal et al.’s own goal of blinding facilitators to test stimuli, my next blog post will focus on the types of errors made during facilitation (as documented in the report). These errors give us clues as to the participants’ ability (or inability) to spell and to the (perhaps unconscious or semi-conscious) impulse for facilitators to guess at the correct answers during the spelling sessions.

Blog posts in this series (Links will be added once the blog posts are published)

Does Cardinal, Hanson, and Wakeham’s 1996 Study Prove Authorship in FC? Part 1 (Rudimentary Information)

Does Cardinal, Hanson, and Wakeham’s 1996 Study Prove Authorship in FC? Part 2 (Test Design)

Does Cardinal, Hanson, and Wakeham’s 1996 Study Prove Authorship in FC? Part 3 (Competing for words)

Does Cardinal, Hanson, and Wakeham’s 1996 Study Prove Authorship in FC? Part 5 (What FCed Errors Tell Us about Facilitator Influence)